Artificial Intelligence (AI) is transforming the engineering industry. From enabling powerful new capabilities to cutting production cycles, AI is making its impact felt across a myriad of applications. However, while AI models can deliver greater accuracy than traditional methods, the complexity that comes with sophisticated models raises several issues. Chief among them: how can engineers know why their AI model makes the decisions it does, and how can they verify that its results are the ones they expected?

This lack of transparency is a result of the sophisticated nature of AI models. To deal with this challenge, engineers are increasingly turning to explainable AI – a set of tools and techniques to help them understand a model’s decisions. Understanding an AI model’s decision-making process is pivotal to the continued evolution of the technology, as well as its integration into a variety of industries, in particular those with a robust regulatory framework, such as finance.

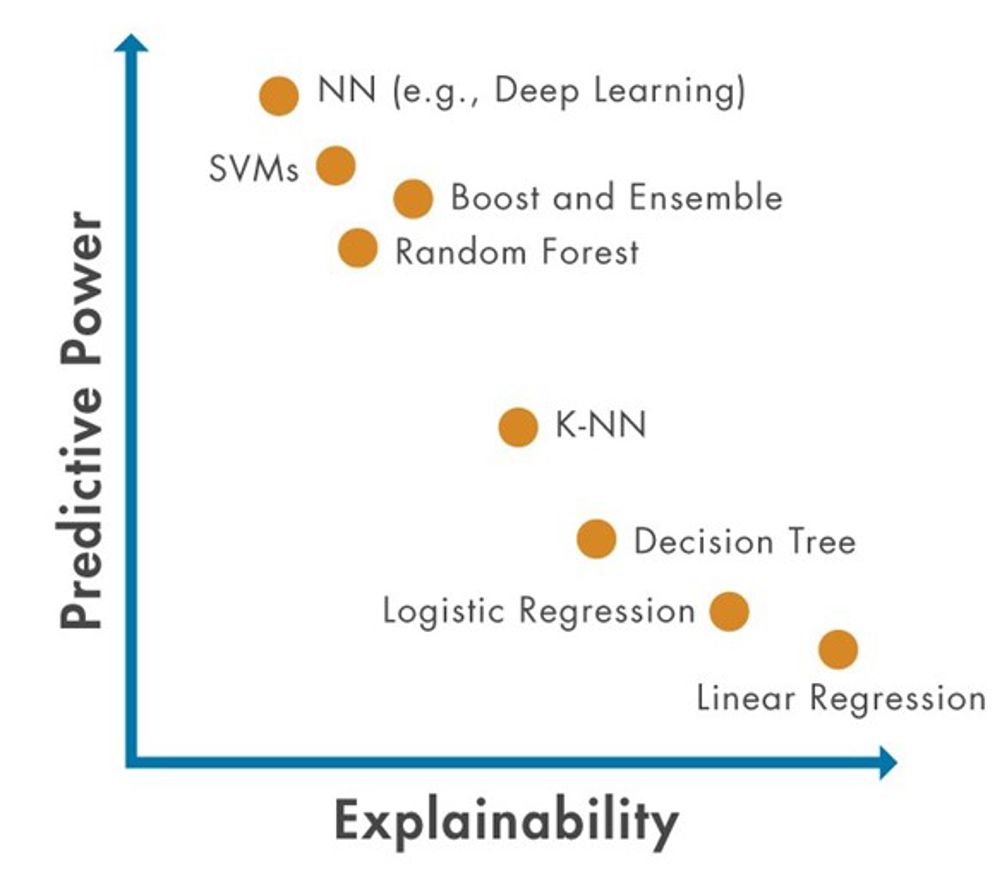

With increased predictive power comes increased complexity

AI models need not always be complex. Models such as those used for temperature control are inherently explainable because of a “common sense” understanding of the physical relationships involved. When the temperature falls below a certain threshold, the heater turns on. When it rises above a higher one, the heater turns off. It is easy to verify that the system is working as expected from the temperature in the room. In applications where black box models are unacceptable, inherently explainable models may be accepted if they are sufficiently accurate.
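The two-threshold rule described above can be written in a few lines, which is what makes it easy to inspect. The following is a minimal sketch only; the function name `heater_command` and the 19 °C and 21 °C thresholds are assumptions chosen for illustration, not values from any particular system.

```python
def heater_command(temp_c, heater_on, low_c=19.0, high_c=21.0):
    """Return the new heater state for a simple two-threshold thermostat.

    low_c and high_c are illustrative thresholds, not values from a real system.
    """
    if temp_c < low_c:
        return True        # too cold: switch the heater on
    if temp_c > high_c:
        return False       # warm enough: switch the heater off
    return heater_on       # between thresholds: keep the current state


# Made-up room temperatures, used only to trace the rule step by step.
readings = [20.0, 18.5, 19.5, 21.5, 20.5]
state = False
trace = []
for temp in readings:
    state = heater_command(temp, state)
    trace.append(state)

print(trace)
```

Every output can be traced back to one of three readable branches, so no separate explanation technique is needed to justify a decision.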

Moving to more sophisticated models allows engineers to improve predictive power. Complex models can take complex data, such as streaming signals and images, and use machine learning and deep learning techniques to process that data and extract patterns that a rules-based approach could not. In so doing, AI can improve performance in complex application areas like wireless and radar communications in ways previously not possible.

What are the advantages of explainability for engineers?

With complexity comes a lack of transparency. AI models are at times referred to as “black boxes,” with complex systems providing little visibility into what the model learned during training, or whether it will work as expected in unknown conditions.

Explainable AI aims to ask questions about the model to explain its predictions, decisions, and actions, so engineers can maintain confidence that their models will work in all scenarios, even as their predictive power (and therefore complexity) increases.

For engineers working on models, explainability can also help analyse incorrect predictions and debug their work. This can include looking into issues within the model itself or the input data used to train it. By demonstrating why a model arrived at a particular outcome, explainable techniques can provide an avenue for engineers to improve accuracy.

Stakeholders beyond model developers and engineers are also interested in the ability to explain a model, with their individual needs shaped by how they interact with the application. For example, a decision maker would want to understand how a model works without getting into the technical explanation, while a customer would want to feel confident that the model will work as expected in all scenarios.

As the desire to use AI in areas with specific regulatory requirements increases, the capacity to demonstrate fairness and trustworthiness in a model’s decisions will grow in significance. Decision makers want to feel confident that the models they work with are rational and will work within a tight regulatory framework.

Of particular importance is the identification and removal of bias in all applications. Bias can be introduced when models are trained on data that is unevenly sampled, and it is particularly concerning when the model’s decisions affect people. Model developers must understand how bias could implicitly sway results to ensure AI models provide accurate predictions without implicitly favouring particular groups.
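One simple first check for uneven sampling is to compare how much of the data each group contributes with how well the model performs on that group. The sketch below is illustrative only: the helper `group_report`, the group labels, and the tiny dataset are all made up for the example, and a real fairness assessment would need far more than this.

```python
from collections import Counter


def group_report(groups, y_true, y_pred):
    """Report each group's share of the data and the model's accuracy on it."""
    counts = Counter(groups)
    correct = Counter(g for g, t, p in zip(groups, y_true, y_pred) if t == p)
    total = len(groups)
    return {
        g: {"share": counts[g] / total, "accuracy": correct[g] / counts[g]}
        for g in counts
    }


# Made-up labels and predictions: group "B" is heavily under-represented.
groups = ["A"] * 8 + ["B"] * 2
y_true = [1, 0, 1, 1, 0, 1, 0, 1, 1, 0]
y_pred = [1, 0, 1, 1, 0, 1, 0, 0, 0, 1]

report = group_report(groups, y_true, y_pred)
for name, stats in report.items():
    print(name, stats)
```

A large gap between groups in either column does not prove bias on its own, but it is a prompt to look more closely at how the data was collected.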

Current explainability methods

To deal with issues like confidence in models and the introduction of bias, engineers can integrate explainability methods into their AI models. Current explainability methods fall into two categories – global and local.

Global methods provide an overview of the most influential variables in the model based on input data and predicted output. For example, feature ranking sorts features by their impact on model predictions, while partial dependence plots chart a specific feature’s impact on predictions across all its values.
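As a rough illustration of these two global methods, the sketch below ranks features by permutation importance (how much the error grows when one feature's values are shuffled) and computes a partial dependence curve (the average prediction when one feature is forced to each value on a grid). Permutation importance is only one of several ways to rank features. The `model` function is a hypothetical stand-in for a trained model, and the data is randomly generated.

```python
import random


def model(row):
    # Hypothetical stand-in for a trained model; feature 2 has no effect.
    return 3.0 * row[0] + 0.5 * row[1] + 0.0 * row[2]


def mse(rows, targets):
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(rows, targets, feature, rng):
    """Increase in mean squared error after shuffling one feature column."""
    baseline = mse(rows, targets)
    column = [r[feature] for r in rows]
    rng.shuffle(column)
    shuffled = [r[:feature] + [v] + r[feature + 1:] for r, v in zip(rows, column)]
    return mse(shuffled, targets) - baseline


def partial_dependence(rows, feature, grid):
    """Average prediction with one feature forced to each grid value."""
    curve = []
    for value in grid:
        forced = [r[:feature] + [value] + r[feature + 1:] for r in rows]
        curve.append(sum(model(r) for r in forced) / len(forced))
    return curve


rng = random.Random(0)
rows = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(200)]
targets = [model(r) for r in rows]  # synthetic targets for illustration

importances = [permutation_importance(rows, targets, j, rng) for j in range(3)]
ranking = sorted(range(3), key=lambda j: importances[j], reverse=True)
pd_curve = partial_dependence(rows, 0, [-1.0, 0.0, 1.0])

print("feature ranking:", ranking)
print("partial dependence of feature 0:", pd_curve)
```

In this toy setup the ranking simply recovers the size of each coefficient, and the partial dependence curve for feature 0 is a straight line. With a real model, the same two views can reveal which inputs dominate and whether their effect is non-linear.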

Local methods, such as Local Interpretable Model-agnostic Explanations (LIME), explain a single prediction. LIME approximates a complex machine learning or deep learning model with a simple, explainable model in the vicinity of a point of interest. By doing so, it provides visibility into which of the predictors most influenced the model’s decision.
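The core idea can be sketched without any external library: sample points near the prediction of interest, weight them by proximity, and fit a weighted linear model whose coefficients act as the local explanation. This is a simplified illustration in the spirit of LIME, not the API of any LIME package; the `black_box` function, the sampling scale, and the kernel width are all assumptions made for the example.

```python
import math
import random


def black_box(x):
    # Hypothetical non-linear model standing in for a trained network.
    return x[0] ** 2 + 2.0 * x[1]


def solve(a, b):
    """Solve a small linear system by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b_i] for row, b_i in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def local_linear_explanation(point, n_samples=500, scale=0.3,
                             kernel_width=0.5, seed=0):
    """Fit a proximity-weighted linear surrogate around `point`.

    Returns one coefficient per feature; larger magnitude means more
    local influence on the black-box output.
    """
    rng = random.Random(seed)
    d = len(point)
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xtwy = [0.0] * (d + 1)
    for _ in range(n_samples):
        sample = [p + rng.gauss(0.0, scale) for p in point]
        dist2 = sum((s - p) ** 2 for s, p in zip(sample, point))
        weight = math.exp(-dist2 / kernel_width ** 2)  # closer samples count more
        features = [1.0] + [s - p for s, p in zip(sample, point)]
        y = black_box(sample)
        for i in range(d + 1):
            xtwy[i] += weight * features[i] * y
            for j in range(d + 1):
                xtwx[i][j] += weight * features[i] * features[j]
    _intercept, *coefs = solve(xtwx, xtwy)
    return coefs


coefs_far = local_linear_explanation([3.0, 0.0])
coefs_origin = local_linear_explanation([0.0, 0.0])
print("near x0 = 3:", coefs_far)
print("near x0 = 0:", coefs_origin)
```

The same model yields different explanations at different points: near x0 = 3 the first feature dominates, while near x0 = 0 it has almost no local influence. That dependence on the point of interest is what distinguishes a local method from a global one.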

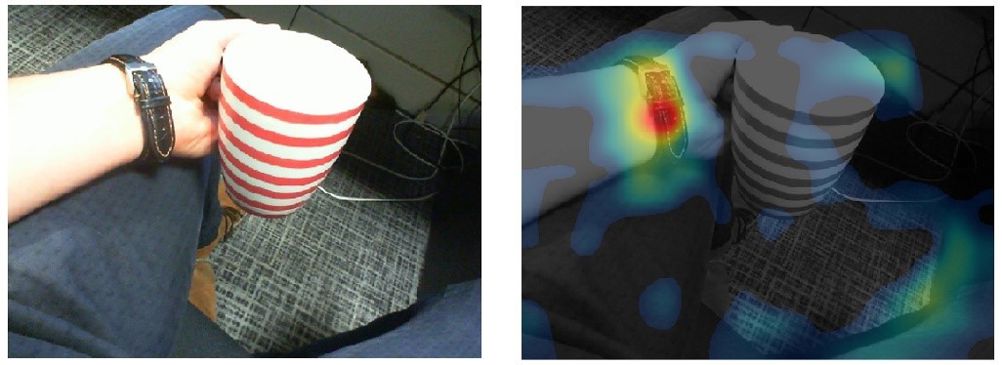

Visualisations are another robust tool for assessing model explainability when building models for image processing or computer vision applications. Local methods such as Grad-CAM identify the locations in an image that most strongly influenced the model’s prediction, while the global method t-SNE uses feature groupings to display high-dimensional data in a simple two-dimensional plot.

What comes after explainability?

While explainability may overcome stakeholder resistance to black box AI models, it is only one step towards integrating AI into engineered systems. AI used in practice requires models that can be understood and constructed using a rigorous process, and that can operate at the level necessary for safety-critical and sensitive applications. For explainability to become deeply embedded in AI development, further research is needed.

This is shown in industries such as aerospace and automotive, which are defining what safety certification of AI looks like for their applications. Traditional approaches replaced or enhanced with AI must meet the same standards and will only be adopted by proving their outcomes with interpretable results. Verification and validation research is moving explainability beyond confidence that a model works under certain conditions, to instead confirm that models used in safety-critical applications meet minimum standards.

As the development of explainable AI continues, it can be expected that engineers will increasingly recognise that the output of a system must match the expectations of the end user. Transparency in communicating results with end users interacting with these AI models will therefore become a fundamental part of the design process.

How does your application benefit from explainability?

Explainability will be a key part of the future of AI. As AI is increasingly embedded into both everyday and safety-critical applications, scrutiny from internal stakeholders and external users is likely to increase.

Ultimately, viewing explainability as essential benefits everyone. Engineers have better information to debug their models and ensure that output matches their intuition, while also gaining more insight into their model’s behaviour to meet compliance standards. As it evolves, AI is likely to only increase in complexity, and the ability of engineers to focus on increased transparency for these systems will be instrumental to its continued use.

Johanna Pingel is AI Product Manager at MathWorks
